
White House National AI Policy Framework Pushes Congress to Preempt State AI Laws

By Hector Herrera | April 19, 2026 | Government

The White House released its National Policy Framework for Artificial Intelligence on March 20, 2026, recommending that Congress establish a single minimally restrictive federal standard for AI and preempt state laws it considers an "undue burden." The framework explicitly rejects creating any new federal regulatory body for AI. Bipartisan opposition has already emerged, signaling that the fight over who governs American AI is far from settled.

What Happened

The framework is a non-binding policy document, not a law or executive order, but it is the clearest statement yet of the administration's position on how AI should be regulated in the United States.

The core recommendation: Congress should pass legislation preempting state AI laws that "impose undue burdens" on AI development or deployment, replacing the current patchwork of state-level rules with a single national standard. That standard would be governed through existing federal agencies—the FTC, FDA, CFPB, and others—rather than a new AI-specific regulator, which the framework explicitly rejects.

The framework's philosophical premise is that permissive, innovation-friendly federal rules will produce better outcomes than restrictive state-level approaches that vary by jurisdiction.

Context

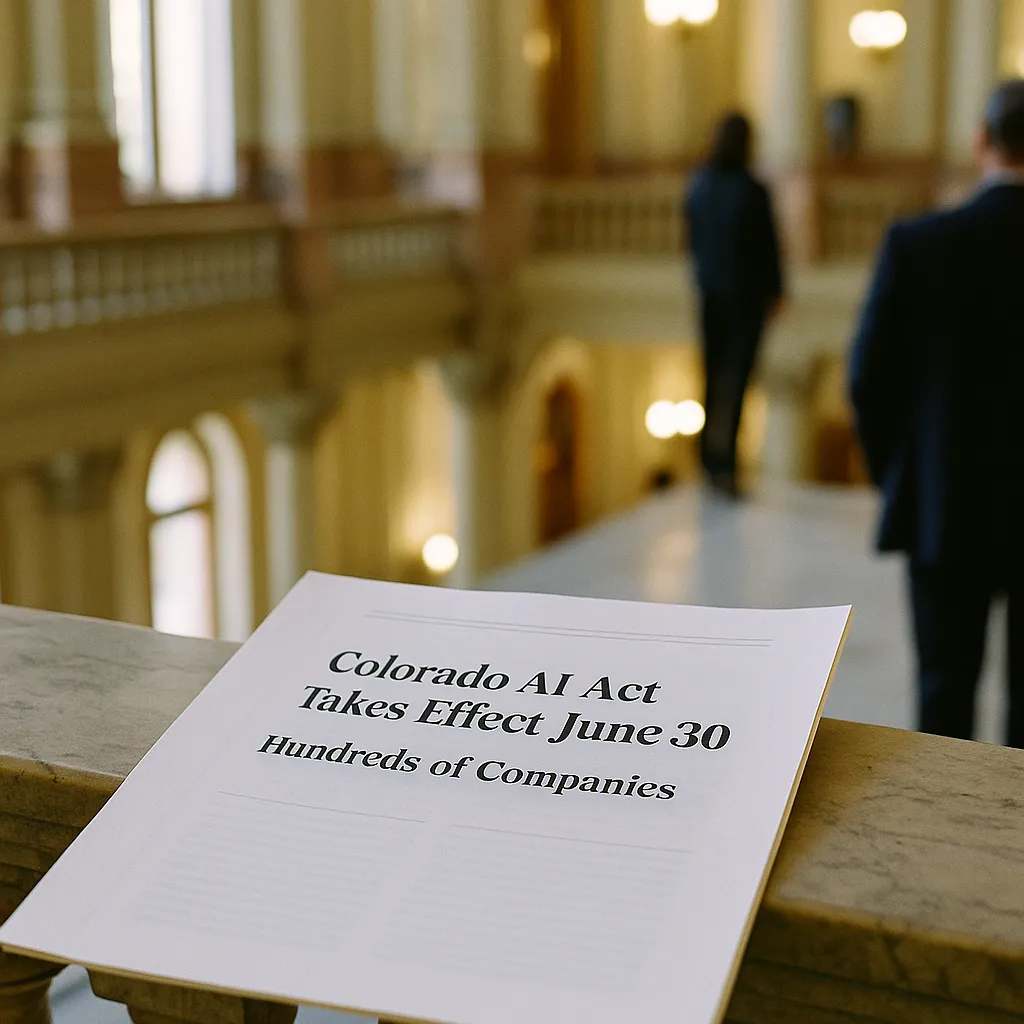

The United States currently has no comprehensive federal AI law. Into that gap, states have moved aggressively. California, Colorado, Texas, Illinois, New York, and others have each passed or are in the process of passing AI-specific legislation, covering everything from automated decision-making to biometric data to algorithmic hiring tools.

This state-by-state approach creates compliance complexity for companies operating nationally. A financial services firm using AI in credit decisions faces different disclosure requirements in California than in Texas. A healthcare company deploying diagnostic AI faces different standards in Illinois than in Colorado. The resulting cost falls heaviest on smaller companies without dedicated legal teams.


The administration's framework reflects the position—advocated forcefully by major technology companies—that federal preemption would reduce this burden and allow American AI development to move faster than in jurisdictions with more restrictive approaches, including the European Union.

Details

- Released: March 20, 2026

- Source: White House Office of Science and Technology Policy

- Key recommendation: Congressional legislation to preempt state AI laws deemed an "undue burden"

- Governance approach: Through existing federal agencies (FTC, FDA, CFPB, etc.), not a new AI regulator

- Opposition: The GUARDRAILS Act, introduced by House Democrats, seeks to repeal the administration's AI executive order

- State tension: California Governor Newsom simultaneously ordered state agencies to assess AI harm in procurement decisions—a direct counter-signal to federal preemption efforts

The framework does not define "undue burden" precisely. That ambiguity is critical: how the term is ultimately defined will determine how much state law survives if Congress acts on the recommendation.

Impact

For AI companies: Federal preemption would consolidate compliance obligations and—depending on how permissive the federal standard is—could reduce restrictions on AI deployment across the country. This is the outcome the technology industry has been lobbying for. The risk is that the federal standard gets negotiated upward by Congress toward more restrictive provisions than the framework envisions.

For states: If Congress preempts state AI law, California, Colorado, Texas, and other states with active AI regulation could lose much of their authority to govern AI systems operating within their borders, potentially even in state-specific contexts like state government contracts and local labor markets. That would be a significant reduction in state sovereignty, and it is why opposition spans both parties: conservatives who support state authority and progressives who want stronger AI consumer protections both have reasons to oppose blanket preemption.

For consumers: A permissive federal standard combined with broad preemption of state protections is the worst-case scenario for consumers. The states that have enacted the strongest AI consumer protections, such as disclosure requirements, audit rights, and appeal mechanisms, would see those protections effectively voided. The administration's framework does not address this risk directly.

For the global regulatory picture: The U.S. approach is the opposite of the EU AI Act, which is risk-tiered, comprehensive, and restrictive. If the U.S. adopts a minimally restrictive federal standard, global companies will face genuinely divergent regulatory environments and will have to decide which market's rules to build toward.

For legal teams: The current state of AI law in the U.S. is in flux—which means compliance decisions made today may need to be revised as the federal-state preemption question resolves. Companies with significant AI deployments should not assume the current state framework will remain stable. Document your compliance reasoning, not just your compliance actions.

What to Watch

The GUARDRAILS Act is the first concrete legislative opposition to the administration's AI policy direction. Watch whether it gains co-sponsors beyond the initial group of House Democrats: Republican support would signal genuinely bipartisan concern that the administration is moving too fast on deregulation. Also watch California. If Governor Newsom's executive order on AI harm in procurement survives a legal challenge, it would demonstrate that states can enforce AI accountability requirements even under federal preemption pressure, at least within their own government operations.

Hector Herrera covers government and AI for NexChron.
