Chinese AI lab DeepSeek released its most capable model yet, more than a year after its breakout release rattled global AI markets, and the efficiency debate is back.

DeepSeek Unveils Its Most Powerful Flagship Model Yet, One Year After Its Market-Shaking Debut

By Hector Herrera | May 4, 2026

Chinese AI lab DeepSeek has released its newest flagship model, more than a year after its R1 reasoning model rattled global AI markets by delivering frontier-level performance at a fraction of the compute cost American labs were spending. The release is likely to reignite a central argument in AI: that the efficiency gap between Chinese and American labs is narrowing fast, and that the capital spending race may not decide who wins.

DeepSeek-R1, released in January 2025, was a watershed moment. It showed that a relatively small Chinese research lab could match leading models from OpenAI and other U.S. labs on standard benchmarks, building on a base model (DeepSeek-V3) whose final training run the lab put at roughly $5.6 million in GPU time, excluding earlier research and experiments. That was a fraction of what U.S. labs were reported to spend. The revelation helped send Nvidia's stock into a single-day drop of nearly $600 billion in market value and pushed rival labs and investors to reassess their efficiency assumptions.

What DeepSeek Released

According to Bloomberg, the new model is DeepSeek's most capable yet. Details on the architecture and training cost remain limited at this stage, but the lab has consistently published technical reports that reveal efficiency innovations — a pattern that distinguishes it from most American labs, which treat training details as proprietary.

The model arrives as DeepSeek continues an open-weights release strategy (the model weights are publicly available for download and inspection), in contrast to OpenAI's flagship GPT models and Anthropic's Claude series, which are accessible only through APIs and hosted apps. That openness has made DeepSeek models popular with researchers and enterprises that want to run AI on their own infrastructure instead of paying per-query API fees.


Why It Matters Now

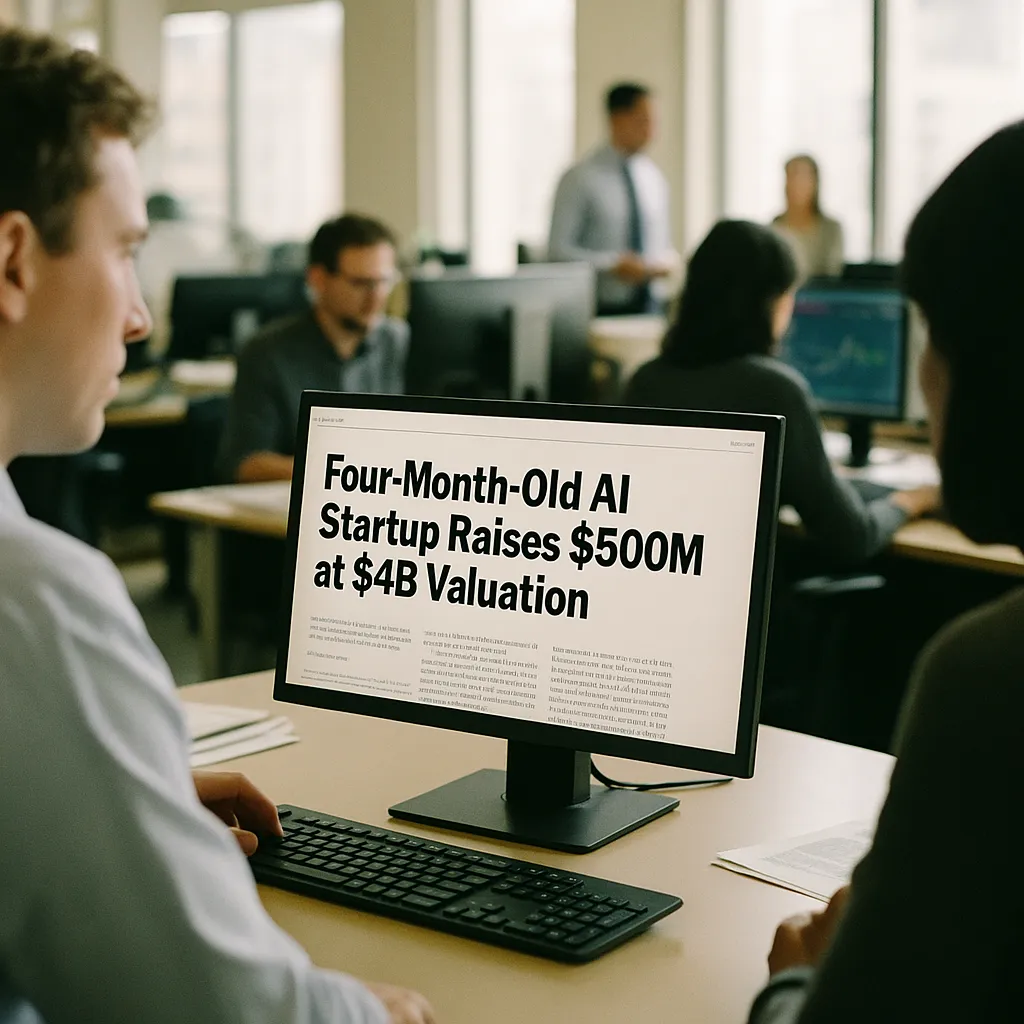

The timing is significant. U.S. AI companies have spent the past year justifying trillion-dollar capital investment projections to investors; Microsoft alone said it planned to spend about $80 billion on AI-enabled data centers in its 2025 fiscal year. Those projections rest on the premise that frontier AI requires massive compute, and that only players with large-scale access to Nvidia GPU clusters can compete at the top.

DeepSeek's efficiency track record directly challenges that premise.

If the new model again demonstrates frontier performance at low training cost, it strengthens the case that:

- Open-weight models can match proprietary ones on many real-world tasks

- American capital expenditure assumptions may be inflated

- U.S. export controls on AI chips are insufficient to prevent competitive parity

U.S. export restrictions on Nvidia's most advanced chips, including the H100 and H200, were designed partly to slow Chinese AI development. According to DeepSeek's own technical report, the V3 base model behind its 2025 breakthrough was trained on H800 chips, a reduced-capability variant Nvidia designed to comply with the export rules in force at the time; the U.S. later restricted the H800 as well. Whether the new model required restricted hardware is a question that will be closely scrutinized.

The Open-Source Dimension

DeepSeek's approach has strengthened the open-source AI ecosystem for developers worldwide. Researchers have used its published weights and architecture details to build smaller, more efficient models, and DeepSeek itself released distilled versions of R1 built on open Qwen and Llama models. Proprietary releases, whose weights stay private, cannot produce that kind of proliferation.

For businesses evaluating AI infrastructure, DeepSeek's continued competitiveness makes open-weight models an increasingly credible alternative to paying for API access from OpenAI or Anthropic. Self-hosted deployments replace per-query API fees with the fixed cost of running their own hardware, and they keep data on-premises. Both considerations matter especially to regulated industries like finance, healthcare, and government.

What to Watch

Expect DeepSeek to release a technical report within weeks of the model launch — the lab has been consistently transparent about its training methodology. That report will answer the critical question: how much compute did this take, and does it again undercut the capital intensity assumptions that U.S. lab valuations depend on?

The benchmark results will also land quickly. If the new model scores at or above OpenAI's and Anthropic's latest flagship models on standard evaluations, and the technical report confirms a low training cost, the efficiency argument will become much harder to dismiss.


Join readers who start their day with NexChron. Free, daily, no spam.